WAND² | HB-2C | HFIR

Step-by-step Instruction

First, get familiar with the Mantid Workbench framework, which is used for HB-2C powder diffraction data reduction, visualization, and some post-processing. Mantid is a commonly used framework for data processing at ORNL and at a few other national facilities around the world. The general user documentation for the Mantid framework can be found here. Mantid can be run locally, but users are strongly recommended to connect to the analysis cluster and use the version of Mantid installed there. The step-by-step instruction for using Mantid with HB-2C data can be found here.

The data reduction step-by-step instruction can be found in the HB-2C powder diffraction data reduction manual.

For measurements in which a variable such as temperature or measurement time is scanned through a continuous range, one can use the provided event filtering script to slice the data across the scanned range of that variable. The script is stored in the shared region under the HB-2C directory on the analysis cluster. Detailed instructions for using the script can be found here.
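To make the idea of slicing along a scanned variable concrete, here is a minimal pure-Python sketch. It does not use Mantid and is not the HB-2C script. The function names, the step-like treatment of the log (the most recent logged value applies until the next one), and the sample data are all assumptions made for illustration.

```python
from bisect import bisect_right


def log_value_at(log_times, log_values, t):
    """Return the most recent log value recorded at or before time t."""
    i = bisect_right(log_times, t) - 1
    return log_values[max(i, 0)]


def slice_events_by_log(event_times, log_times, log_values, start, stop, step):
    """Group event timestamps into bins of width `step` of the logged variable."""
    n_bins = int(round((stop - start) / step))
    segments = [[] for _ in range(n_bins)]
    for t in event_times:
        value = log_value_at(log_times, log_values, t)
        if start <= value < stop:
            idx = min(int((value - start) // step), n_bins - 1)
            segments[idx].append(t)
    return segments


# Hypothetical data: a temperature log (K) sampled every 10 s during a ramp.
log_times = [0.0, 10.0, 20.0, 30.0]
log_values = [10.0, 20.0, 30.0, 40.0]
event_times = [1.0, 5.0, 12.0, 18.0, 25.0, 29.0]

segments = slice_events_by_log(event_times, log_times, log_values, 10.0, 40.0, 10.0)
for i, seg in enumerate(segments):
    print(f"segment {i}: {len(seg)} events")
```

Each resulting segment would then be reduced separately, giving one pattern per slice of the scanned variable.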

Usually, the default script used in the instructions above works fine. However, with a large number of filtered segments, the overall computation time may reach 1-2 hours, which is impractical. For this reason, we have created a separate script that performs the event filtering in parallel, which can significantly reduce the overall computation time. The script can be found at

`/HFIR/HB2C/shared/WANDscripts/HB2C_filter_parallel.py`

and its input section is the same as for the script above.
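The reason parallel processing helps is that each filtered segment can be handled independently of the others. The sketch below illustrates that pattern with Python's standard `concurrent.futures` module. It is not the contents of `HB2C_filter_parallel.py`; `reduce_segment` is a hypothetical placeholder that only counts events, and the segment data are made up.

```python
from concurrent.futures import ThreadPoolExecutor


def reduce_segment(segment):
    """Hypothetical per-segment work; here it only counts the events."""
    index, events = segment
    return index, len(events)


def reduce_all(segments, max_workers=4):
    """Process every segment on a pool of workers, preserving segment order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(reduce_segment, enumerate(segments)))


# Hypothetical filtered segments (lists of event timestamps).
segments = [[1.0, 5.0], [12.0, 18.0, 19.0], [25.0]]
results = reduce_all(segments)
print(results)
```

A thread pool is used here to keep the example simple. For CPU-bound pure-Python work, `ProcessPoolExecutor` offers the same `map` interface and could be swapped in.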

More Materials

Refer to the user guide available at the HB-2C instrument website, https://neutrons.ornl.gov/wand/users.

Data Reduction Principle

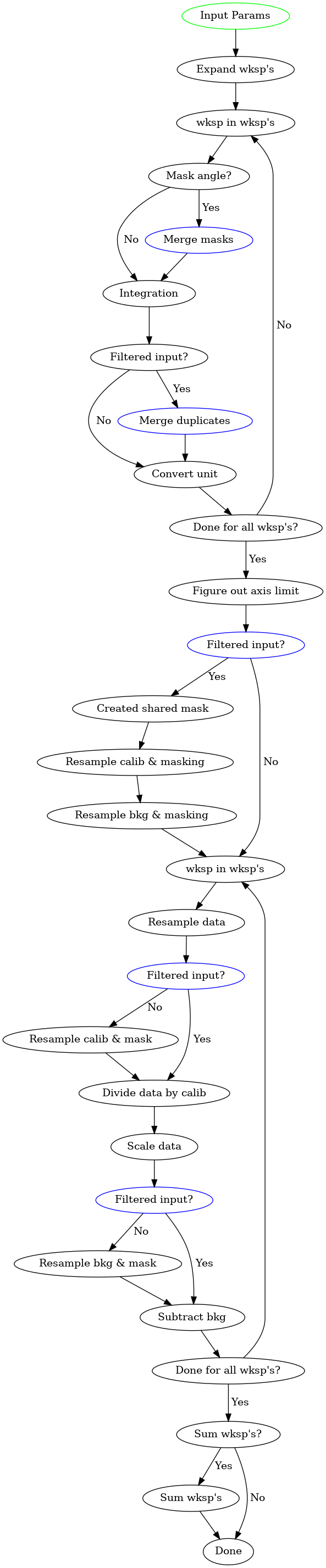

A high level flowchart summarizing the overall powder diffration data reduction workflow for WAND² is presented as below,

The Mantid framework is used for the reduction; refer to the Mantid documentation here for information on all the relevant algorithms.

Several key aspects are worth noting:

- For the `Merge mask` step (see one of the blue nodes above), all the relevant masks are merged, including the manually provided mask (if any), the mask already existing in the workspace, and the mask generated from the mask angle range (if given).

- For the `Merge duplicates` step (see one of the blue nodes above), adjacent detectors with identical scattering angle values are merged together.

- Note that the `Filtered input` statement appears at several spots in the flowchart. When the processed workspaces are obtained via event filtering, special care is needed to keep the reduced data consistent so that they are directly comparable. In particular, the mask used for all the filtered workspaces must be identical.

- Given the current implementation of the reduction routine, the masks for the data, the calibration (vanadium measurement for normalization), and the background must be identical. This requires cross-masking all the relevant workspaces before feeding them into the data reduction routine.

- At the final summing stage (if requested), if the `MultiplyBySpectra` parameter (see the documentation here for details) is set to `False`, the average is calculated over all the spectra involved.
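The mask merging and cross-masking described above amount to taking the union of several masks and applying that union to every workspace. The sketch below shows this with plain Python sets of detector IDs. It is a conceptual illustration, not the reduction routine's implementation; the function names, the representation of a mask as a set, and the detector IDs are assumptions.

```python
def merge_masks(*masks):
    """Union of several masks, each a set of detector IDs (None if absent)."""
    merged = set()
    for mask in masks:
        if mask:  # skip masks that were not provided
            merged |= set(mask)
    return merged


def cross_mask(workspace_masks):
    """Give every workspace the union of all masks so that they are identical."""
    common = merge_masks(*workspace_masks.values())
    return {name: set(common) for name in workspace_masks}


# Hypothetical masked detector IDs for each input; the background has no mask.
masks = {
    "data": {3, 4},
    "vanadium": {4, 10},
    "background": None,
}
crossed = cross_mask(masks)
print(sorted(crossed["data"]))
```

After cross-masking, the data, vanadium, and background all exclude the same detectors, which is the condition the reduction routine requires.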